What This Guide Covers

Search has changed. Instead of ranking pages in a list, AI systems now retrieve information from multiple sources and assemble it into a single answer. Google’s AI Overviews, ChatGPT, Perplexity, and similar tools don’t just point users to websites; they build responses and cite sources within those responses.

This shift creates a new visibility challenge. Your page can rank well in the traditional index and still remain unseen if the AI system doesn’t select it for the summary. Citation inside the answer has become the new form of exposure.

This guide explains Generative Engine Optimization (GEO), the practice of structuring content so AI systems retrieve, use, and cite it. You’ll learn how these systems work, what signals influence source selection, and how to measure performance when clicks no longer reflect actual reach.

The approach draws on research from Google, Microsoft, OpenAI, and industry studies from Seer Interactive, Search Engine Journal, and Pew Research Center.

Why This Matters Now

AI-generated summaries already affect a significant share of searches. AI Overviews appear on about 21% of Google searches, with much higher presence on informational and question-based queries. When they appear, users often see a generated answer before they see traditional results.

The impact on traffic is substantial. Organic click-through rates drop by up to 61% when AI Overviews appear above traditional results. Paid results show similar declines of up to 68%, because ads usually appear below the summary.

Users are adapting too. Research shows that people click fewer links when an AI summary appears. Many treat the summary as the endpoint for early research rather than a starting point for site visits. The cited links act as references for deeper review, not the default next step.

This means strong placement alone no longer predicts traffic. A page can hold a visible position and still lose clicks if the AI summary answers the main question. And a source that never appears in the summary loses exposure entirely, even if it ranks well in the index.

| Metric | Data Point | Source Context |
|---|---|---|
| AI Overview Presence | Appears on ~21% of Google searches | Higher on informational and question-based queries |
| Organic CTR Decline | Up to 61% drop when AI Overviews appear | Users see generated answer before traditional results |
| Paid CTR Decline | Up to 68% drop | Ads typically appear below the AI summary |
| User Behavior Shift | Fewer link clicks when summary is present | Many treat the summary as the research endpoint |

How Generative Search Systems Work

Understanding the mechanics helps you design content that these systems can actually use.

The Retrieval-Augmented Generation Model

Generative search systems separate retrieval from answer creation. They don’t just pull from training data. They search an external document set, select relevant material, and pass it to a language model that builds a response based on those sources.

The retrieval step shapes everything. If the system doesn’t select your content during retrieval, it can’t appear in the answer. Visibility depends on how well your content matches retrieval signals, not just how well it reads.

How Google’s AI Overviews Work

Google explains that AI Overviews use a “query fan-out” method. When a user enters a query, the system runs several related searches across subtopics and viewpoints. It gathers material from different sources and builds a summary that covers the question from multiple angles.

This shifts focus away from a single “best” page. The system looks for useful passages that explain parts of the topic. A page can earn a citation by explaining one aspect well, even if it doesn’t cover the full subject.
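The fan-out idea can be illustrated with a toy sketch. This is not Google’s implementation: the sub-query templates, the three-passage corpus, and the word-overlap scoring are all assumptions chosen to keep the example small. It only shows how running several related searches lets a passage enter the candidate pool through one aspect of the topic.

```python
import re

# Hypothetical mini-corpus: passage id -> passage text (not real pages).
PASSAGES = {
    "site-a#definition": "Generative engine optimization structures content so AI systems can cite it.",
    "site-b#measurement": "To measure citation rate, count how often a source appears in AI summaries.",
    "site-c#pricing": "Our platform starts at a low monthly price.",
}


def tokens(text):
    """Lowercase word set used for naive overlap scoring."""
    return set(re.findall(r"[a-z]+", text.lower()))


def fan_out(query):
    """Expand one query into related sub-queries (templates are assumptions)."""
    return [query, f"what is {query}", f"how to measure {query}"]


def retrieve(sub_query, k=2):
    """Return up to k passage ids that share the most words with the sub-query."""
    q = tokens(sub_query)
    scored = [(len(q & tokens(text)), pid) for pid, text in PASSAGES.items()]
    scored = [item for item in scored if item[0] > 0]
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [pid for _, pid in scored[:k]]


def gather(query):
    """Run every sub-query and collect distinct passages in first-seen order."""
    found = []
    for sub in fan_out(query):
        for pid in retrieve(sub):
            if pid not in found:
                found.append(pid)
    return found


print(gather("generative engine optimization"))
```

In this sketch the measurement passage is retrieved only by the third sub-query, and the promotional pricing passage is never retrieved at all.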

| Stage | What Happens | What Gets Filtered Out |
|---|---|---|
| Query Interpretation | System identifies intent, key concepts, and breaks query into related themes | Queries with no clear information need may not trigger summaries |
| Document Retrieval | System searches index for documents matching identified themes | Pages not indexed or not matching themes are excluded |
| Passage Extraction | System pulls specific sections from retrieved documents | Dense or unfocused passages without clear answers get skipped |
| Summary Generation | System combines extracted passages into a structured answer | Material that conflicts or lacks clarity may be excluded from assembly |
| Citation Selection | System assigns source links to parts of the summary | Sources without clear authority signals or structural clarity lose citation placement |

The Five-Step Process

Most generative search systems follow the same sequence:

- Query interpretation. The system identifies user intent and key concepts, breaking a single query into related themes.

- Document retrieval. The system searches an index for documents matching those themes.

- Passage extraction. The system pulls specific sections from those documents, treating each page as a set of passages rather than a single block.

- Summary generation. The system combines extracted material into a structured answer.

- Citation selection. The system assigns source links to parts of the summary to show where information came from.

Each step filters the pool of sources. Your content must match the themes, provide clear passages, and fit into the final answer to gain exposure.
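The five steps above can be sketched as a tiny pipeline. This is a simplified illustration, not how any production system works: the `Passage` type, the `INDEX` contents, the stop-word list, and the keyword matching are all hypothetical, and the "generation" step simply joins passages instead of calling a language model.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    url: str
    heading: str
    text: str


# Hypothetical index: documents already split into passages by heading.
INDEX = [
    Passage("https://example.com/geo", "What is GEO",
            "GEO is the practice of structuring content so AI systems cite it."),
    Passage("https://example.com/geo", "Company news",
            "We opened a new office this year."),
    Passage("https://example.org/metrics", "Measuring GEO",
            "Citation rate tracks how often a source appears in summaries."),
]

# Assumed stop words removed during query interpretation.
STOP_WORDS = {"what", "is", "and", "how", "the", "a", "to", "do", "you", "it"}


def interpret(query):
    """Step 1: reduce the query to a set of lowercase key terms."""
    words = query.lower().replace("?", "").split()
    return {w for w in words if w not in STOP_WORDS}


def retrieve_and_extract(themes):
    """Steps 2-3: keep only passages whose heading or text mentions a theme."""
    hits = []
    for p in INDEX:
        words = set((p.heading + " " + p.text).lower().replace(".", "").split())
        if themes & words:
            hits.append(p)
    return hits


def generate_with_citations(passages):
    """Steps 4-5: join passages into an answer and number each source once."""
    sources = []
    lines = []
    for p in passages:
        if p.url not in sources:
            sources.append(p.url)
        lines.append(f"{p.text} [{sources.index(p.url) + 1}]")
    return " ".join(lines), sources


themes = interpret("What is GEO and how do you measure it?")
answer, sources = generate_with_citations(retrieve_and_extract(themes))
print(answer)
print(sources)
```

The off-topic "Company news" passage sits on a page that is cited, yet it is filtered out at the extraction step, which is the passage-level behavior the list describes.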

What This Means for Your Content

AI systems evaluate passages, not whole pages. A page can rank well in traditional search and still fail to appear in a summary if its key points are hard to isolate.

Content clarity acts as a technical signal. Systems favor statements that express one idea in direct language. Clear headings, defined terms, and short explanations make it easier for retrieval models to identify useful sections. Dense writing or broad commentary creates friction during extraction.

Structure matters too. When a page separates topics into clear sections, the system can map those sections to parts of the query. Pages that mix many ideas without clear boundaries offer fewer usable units.

Design your content so each section can stand on its own. Each part should answer a specific question or explain a specific concept in a way retrieval systems can recognize.

Citation Is the New Visibility

In generative search, visibility means appearing inside the answer, not just ranking in the index.

How AI Systems Choose Sources

Retrieval systems filter content before building a response. They look for material that matches the query and fits signals tied to reliability and structure.

- Institutional signals. Systems often select domains with established credibility: major publishers, research groups, public agencies, and recognized industry sources. These sites show stable topic coverage, clear authorship, and regular publishing patterns.

- Factual structure. Headings, defined terms, and short statements allow extraction models to isolate useful passages. When a page presents one idea per section, the system can link that section to part of the query.

- Topical focus. Pages that stay within a defined subject area provide stronger matches during retrieval. Broad pages covering many topics give weaker signals about which part matches the query.

- External references. When content points to outside research, standards, or recognized institutions, it signals connection to a wider knowledge base. This can raise the chance of inclusion in the retrieval set.

Research Findings

Academic research on generative retrieval systems shows they favor content from domains with strong institutional traits and from documents that present information in clear, organized formats. Structured content increases the likelihood that passages enter the retrieval pool and appear in summaries.

Retrieval models apply filters before generation. They weigh source traits and document structure along with topical match. Citation outcomes reflect both relevance and how well the source fits system preferences for format and authority.

What to Measure Now

These patterns change your metrics. Ranking position and traffic volume no longer capture full exposure.

Citation frequency. Track how often your sources appear inside AI-generated summaries across a defined set of queries. This shows whether systems retrieve and use your content during answer construction.

Brand presence in summaries. Users see your brand name or domain as part of cited sources even when they don’t click. Repeated appearance across related queries shapes recognition during research.

Traffic as a secondary signal. Visits still indicate deeper interest and buying intent, but they reflect only users who move beyond the summary. You can lose visits and still gain reach through consistent citation.

Authority Signals That Drive Selection

Generative search systems select sources based on signals tied to reliability and clarity. These operate at both the domain level and the content level.

Domain-Level Signals

Institutional standing. AI systems favor domains from public agencies, research groups, academic publishers, and established industry outlets. These sites typically publish within defined subject areas, show clear authorship or editorial standards, and update content regularly.

Research backing. Domains that publish or cite studies, surveys, or technical documentation provide material systems can treat as factual input. This requires traceable data, named institutions, and clear methods.

Historical citation presence. When a domain appears often across related queries, the system encounters its material repeatedly during retrieval. Over time, this pattern can increase the chance of entering the candidate set for that topic.

Content-Level Signals

Clear definitions. When a section explains a term or concept in direct language, the system can extract that statement and use it in a summary.

Data inclusion. Tables, figures, and cited statistics give the system concrete elements to reference. Passages with specific values, dates, or sources provide anchors for factual output.

Sectioned structure. Headings, lists, and short paragraphs separate ideas into units the system can treat as candidate passages. Long blocks without clear breaks provide fewer usable segments.

Neutral tone. Writing that explains or reports fits more easily into a summary than promotional or opinion-driven text. AI systems aim to present explanatory content, making neutral passages more likely to appear.

| Signal Level | Signal Type | What It Means for Retrieval |
|---|---|---|
| Domain Level | Institutional Standing | Systems favor public agencies, research groups, academic publishers, established industry outlets |
| Domain Level | Research Backing | Domains publishing or citing studies with traceable data and named institutions provide factual input |
| Domain Level | Historical Citation Presence | Repeated appearance across related queries increases chance of entering candidate set |
| Content Level | Clear Definitions | Direct explanations of terms or concepts can be extracted for summaries |
| Content Level | Data Inclusion | Tables, figures, cited statistics give systems concrete elements to reference |
| Content Level | Sectioned Structure | Headings, lists, short paragraphs create candidate passages for extraction |
| Content Level | Neutral Tone | Explanatory or reporting language fits summaries better than promotional text |

Why This Matters

OpenAI and Microsoft describe retrieval-augmented systems as tools that ground generated answers in external sources to limit errors and support factual output. These systems retrieve content that helps verify statements. They favor material providing clear, verifiable information over material focused on persuasion.

Passages that define terms, report findings, or explain processes offer direct support for generated statements. Promotional framing provides less value during grounding and appears less often in retrieval sets.

Designing Content for Generative Visibility

Generative search systems surface content by selecting and reusing small sections of text. Structure becomes a technical requirement, not just an editorial choice.

Structural Requirements

Clear headings guide retrieval. Systems scan pages for signals that separate one idea from another. A descriptive heading tells the system what the next section covers and helps match it to part of a query.

Direct answers near the start. Many retrieval models focus on early sentences because they often state the main point. When a section opens with a clear claim or definition, the system can extract that line for a summary. Sections beginning with background or narrative delay the main point and reduce extraction value.

Defined terms reduce ambiguity. When a section explains what a term means in plain language, the system can connect that passage to explanation-based queries.

Scoped claims improve fit. A statement that includes a time frame, industry, or condition gives the system a clearer match to specific questions.

These elements work together in a simple pattern: the heading names the topic, the opening sentence states the point, and the following lines add detail or evidence. This creates a clean unit the system can reuse without added interpretation.

Passage-Level Competition

In generative search, paragraphs compete for inclusion. Retrieval systems treat a document as a set of small units. Each unit must match part of the query and provide information fitting a generated response.

Design pages with multiple entry points. A long article can offer many retrieval opportunities if each section stands on its own. A page with one broad block of text offers fewer usable units.

Watch passage length. Very short lines often lack enough context. Very long blocks often mix several ideas. Paragraphs explaining one concept in a few sentences tend to provide enough detail while keeping the idea contained.

Separate sections clearly. When each section addresses a different question or claim, the system can map those sections to different parts of the query. Pages repeating the same point across several sections give fewer distinct options.

This explains why a lower-ranking page can still appear in a summary. A single clear paragraph can match retrieval better than a highly ranked page presenting its key point less directly.
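The passage-length guidance in this section can be turned into a rough audit script. The sketch below splits a page’s text into paragraphs and labels each by word count. The `min_words` and `max_words` thresholds are illustrative assumptions, not values published by any platform, so tune them against your own content.

```python
import re


def audit_paragraphs(text, min_words=20, max_words=120):
    """Label each paragraph by word count; thresholds are assumptions."""
    report = []
    # Paragraphs are assumed to be separated by one or more blank lines.
    for para in re.split(r"\n\s*\n", text.strip()):
        n = len(para.split())
        if n < min_words:
            label = "too short"
        elif n > max_words:
            label = "too long"
        else:
            label = "ok"
        report.append((n, label))
    return report


sample = "Short line.\n\n" + " ".join(["word"] * 50) + "\n\n" + " ".join(["word"] * 200)
for count, label in audit_paragraphs(sample):
    print(count, label)
```

Word count is only a proxy. A paragraph inside the range can still mix several ideas, so treat the labels as prompts for manual review.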

Using Evidence Effectively

Systems favor passages supporting claims with concrete information.

Include sourced data. Figures, dates, and named organizations give the system stable elements to reference. A sentence stating a figure and naming its source provides a clear anchor for factual output.

Reference institutions and reports. Links to research groups, public agencies, or industry bodies signal connection to a wider knowledge base.

Place evidence close to claims. Data should appear near the statement it supports. When a statistic sits far from the claim it explains, the system may extract one without the other.

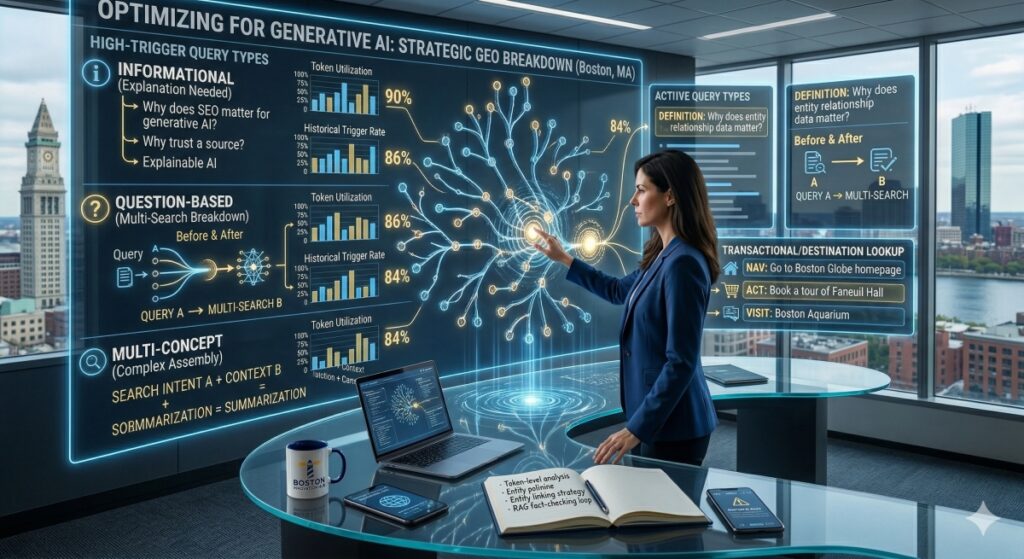

Which Queries Trigger AI Summaries

Generative systems don’t apply summaries evenly. They surface AI-generated answers most often when they detect a need for explanation, synthesis, or context.

High-Trigger Query Types

Informational queries. These seek definitions, explanations, or background: what a concept means, how a process works, or why a trend exists. These formats signal that the user needs more than a single fact, so the system gathers material from several sources and builds a structured response.

Question-based queries. Searches beginning with “how,” “what,” or “why” tend to break into multiple sub-questions during interpretation. This gives the system a clear path to run several related searches and combine results into a single answer.

Multi-concept searches. Queries including more than one idea, condition, or comparison, like combining a problem with a context or a solution with a constraint, get interpreted as complex requests requiring material from multiple sources.

Lower-Trigger Query Types

Queries implying a simple lookup or clear destination often return standard results. Navigation searches (looking for a specific site) and straightforward transactional searches (ready to buy a specific product) trigger summaries less often.

| Query Type | Trigger Likelihood | Why |
|---|---|---|
| Informational | High | Seeks definitions, explanations, or background requiring multi-source synthesis |
| Question-Based (how/what/why) | High | Breaks into sub-questions, giving systems a path to run multiple related searches |
| Multi-Concept | High | Combines problem + context or solution + constraint, requiring material from multiple sources |
| Navigational | Low | User looking for a specific site; no synthesis needed |
| Transactional (simple) | Low | Ready-to-buy intent with clear destination; standard results suffice |
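For keyword planning, the categories in the table can be approximated with a rule-based tagger. This is a rough heuristic under stated assumptions: the hint word lists are hypothetical, and real query intent is far more nuanced than keyword matching. It is meant for a first-pass grouping of a keyword list, not for predicting whether a summary will appear.

```python
# All hint lists below are illustrative assumptions, not platform rules.
QUESTION_WORDS = {"how", "what", "why"}
NAVIGATIONAL_HINTS = {"login", "homepage", "website"}
TRANSACTIONAL_HINTS = {"buy", "order", "pricing", "coupon"}
MULTI_CONCEPT_HINTS = {"vs", "versus", "for", "with", "without"}

# Trigger likelihood per query type, taken from the table above.
TRIGGER = {
    "informational": "high",
    "question-based": "high",
    "multi-concept": "high",
    "navigational": "low",
    "transactional": "low",
}


def classify_query(query):
    """Assign a coarse query type using keyword hints."""
    words = query.lower().split()
    if not words:
        return "unknown"
    if words[0] in QUESTION_WORDS:
        return "question-based"
    unique = set(words)
    if unique & NAVIGATIONAL_HINTS:
        return "navigational"
    if unique & TRANSACTIONAL_HINTS:
        return "transactional"
    if unique & MULTI_CONCEPT_HINTS:
        return "multi-concept"
    return "informational"


def trigger_likelihood(query):
    """Map a query to the table's high/low trigger likelihood."""
    return TRIGGER.get(classify_query(query), "unknown")


for q in ["how does retrieval work", "acme crm login", "crm vs spreadsheet for small teams"]:
    print(q, "->", classify_query(q), "/", trigger_likelihood(q))
```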

What the Data Shows

AI Overviews appear more often on long-form and question-structured queries than on short or navigation-focused searches. Longer queries tend to reflect more specific or layered information needs, increasing the chance of a synthesized answer.

Queries asking for comparisons, step-by-step explanations, or definitions with conditions show higher summary exposure than queries seeking a brand name, location, or product page.

Planning Implications

Transactional keywords still matter for direct response and conversion, but they play a smaller role in generative visibility. Learning-driven queries shape where summaries appear and which sources enter the research phase.

Map your topic areas to the questions buyers ask during early and mid-stage evaluation. This includes queries that frame problems, explore options, or compare approaches. Content built around these queries creates more opportunities for passage-level retrieval and citation.

Coverage focusing only on high-intent, late-stage terms misses much of the space where AI systems construct answers. Coverage addressing learning-driven and multi-concept searches aligns better with how summaries trigger and how sources gain visibility.

Measuring GEO Performance

When systems answer questions directly on the results page, traffic no longer reflects full exposure. Measurement must account for how often your source appears inside summaries and whether that presence carries across a user’s research process.

Metrics That Matter Now

Citation rate per query set. Track how often your sources appear inside AI-generated summaries across a defined group of queries. A rising rate suggests stronger alignment with system signals, even when click volume stays flat.

Brand mention frequency. When an AI system cites your source, users see your brand or domain in the summary. Repeated exposure across related searches builds recognition during the learning phase.

Source persistence across sessions. Some sources appear once then drop out. Others continue appearing as users refine or expand queries. Persistence suggests the system treats your source as a stable reference point.

| Metric | What It Captures | Value for GEO |
|---|---|---|
| Citation Rate Per Query Set | How often sources appear in AI summaries across defined queries | Rising rate = stronger alignment with system signals |
| Brand Mention Frequency | Repeated appearance of brand/domain in summaries across related searches | Builds recognition during learning phase even without clicks |
| Source Persistence | Whether your source continues appearing as users refine queries | Suggests system treats source as stable reference point |
| Rank Tracking | Position in traditional index | Limited: doesn’t show whether system retrieves or cites the source |
| Raw Click Volume | Visits from search results | Loses context: drops may reflect summary presence, not reduced relevance |
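Citation rate and source persistence can be computed from a simple log of sampled results. The sketch below assumes you record, for each sampling date and query, which domains the AI summary cited. The `OBSERVATIONS` data, the domain names, and the definition of persistence (share of sampling dates on which a domain was cited for a query) are illustrative assumptions.

```python
from collections import defaultdict

# Hypothetical manual sample: (date, query, domains cited in the AI summary).
OBSERVATIONS = [
    ("2025-01-06", "what is geo", ["example.com", "bigpublisher.com"]),
    ("2025-01-06", "how to measure geo", ["bigpublisher.com"]),
    ("2025-01-13", "what is geo", ["example.com"]),
    ("2025-01-13", "how to measure geo", []),
]


def citation_rate(observations, domain):
    """Share of sampled results in which the domain was cited."""
    if not observations:
        return 0.0
    cited = sum(1 for _, _, domains in observations if domain in domains)
    return cited / len(observations)


def persistence(observations, domain):
    """Per query, fraction of sampling dates on which the domain was cited."""
    seen = defaultdict(list)
    for _, query, domains in observations:
        seen[query].append(domain in domains)
    return {query: sum(flags) / len(flags) for query, flags in seen.items()}


print(citation_rate(OBSERVATIONS, "example.com"))
print(persistence(OBSERVATIONS, "example.com"))
```

With this sample, `example.com` is cited in half of all observations but on every sampling date for one query and never for the other, a split that the overall rate alone would hide.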

Metrics That Lose Value

Rank tracking offers limited insight. A page can rank well and still fail to appear in a summary. Rankings show index position, not whether a system retrieves or cites the source.

Raw click volume loses context. Clicks now represent only users who move beyond the summary. A session drop may reflect summary presence rather than reduced relevance. Without citation data, you can’t tell whether the system ignored your content or displayed it without a click.

These measures still matter for late-stage evaluation and conversion. They no longer describe full reach at the research stage.

Attribution Challenges

Multi-source summaries complicate tracking. A single answer can cite several domains. Users may read the summary and return later through branded search or direct visits. Standard models often credit only the last step and miss early exposure.

Use assisted conversions, branded search trends, and direct traffic patterns to infer whether summary presence leads to later engagement. These remain indirect but provide more context than click-based models alone.

Building Your Dashboard

Effective GEO reporting combines system-level and site-level data. Track summary presence, citation count, and source persistence for each query, then compare with branded search volume, direct visits, and assisted conversions.

Separate three layers:

Exposure inside summaries. Where and how often does your content appear in AI-generated answers?

Downstream engagement. What happens on your site after exposure?

Commercial outcomes. How does this connect to leads, opportunities, or revenue?

This structure helps explain gaps. High citation with low engagement may signal content that informs but doesn’t prompt action. Low citation with stable rankings may point to structure or evidence issues limiting retrieval.
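The gap patterns described above can be expressed as simple rules over a per-topic dashboard row. The field names and every threshold in this sketch (`cite_floor`, `engage_floor`, `rank_ceiling`) are hypothetical placeholders. Calibrate them against your own baseline rather than treating them as benchmarks.

```python
from dataclasses import dataclass


@dataclass
class TopicRow:
    topic: str
    citation_rate: float   # exposure layer: share of sampled summaries citing you
    avg_rank: float        # traditional index position for the topic's queries
    engaged_sessions: int  # downstream engagement layer
    leads: int             # commercial outcome layer


def diagnose(row, cite_floor=0.2, engage_floor=50, rank_ceiling=10):
    """Flag the two gap patterns described in the text; thresholds are assumptions."""
    if row.citation_rate >= cite_floor and row.engaged_sessions < engage_floor:
        return "cited but low engagement: content informs but may not prompt action"
    if row.citation_rate < cite_floor and row.avg_rank <= rank_ceiling:
        return "ranks but rarely cited: review structure and evidence"
    return "no gap flagged"


rows = [
    TopicRow("geo basics", citation_rate=0.4, avg_rank=6.0, engaged_sessions=12, leads=0),
    TopicRow("geo metrics", citation_rate=0.05, avg_rank=3.0, engaged_sessions=140, leads=4),
]
for row in rows:
    print(row.topic, "->", diagnose(row))
```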

Current Limitations

Platforms don’t yet provide direct reporting on summary impressions or citation counts. You must rely on sampling or third-party tools, which limits scale and accuracy. Even with these constraints, tracking citation behavior offers a clearer view of generative visibility than rankings and sessions alone.

Risks and Constraints

Generative visibility depends on platforms you don’t control. This creates limits in both measurement and influence.

Platform Dependency

Google Search Console doesn’t report AI Overview citations or summary impressions. You can’t see how often your source appears inside an AI-generated answer or which passages the system selects. Most GEO tracking relies on manual sampling or third-party tools that scan results pages. Both methods introduce gaps and don’t scale well across large keyword sets.

Source Selection Bias

Research shows retrieval systems favor domains with strong institutional signals and clear structure. This limits visibility for smaller publishers, niche experts, and new domains lacking an established footprint. Even when these sources offer high-quality information, AI systems may filter them out because they don’t match expected authority signals.

This can concentrate exposure among a small group of large publishers and reduce diversity in cited sources.

Regulatory Uncertainty

Regulators and publishers have raised concerns about how AI systems use and cite content. Ongoing scrutiny in the European Union questions whether generative search features provide fair visibility and compensation to content owners. These discussions may lead to changes in how platforms display sources, manage licensing, or limit summary features in certain regions, altering GEO dynamics without notice.

Technical Boundaries

Generative systems rely on indexed content. Material behind paywalls, logins, or restricted formats often remains invisible. Retrieval models may also favor recent or frequently cited material, disadvantaging slower-moving fields or long-form research that updates less often.

Plan with the understanding that you can adjust content and structure, but you can’t fully control how platforms retrieve and present sources. Avoid treating citation presence as a guaranteed outcome.

Where GEO Is Headed

This section presents forward-looking analysis based on current behavior and research trends, not confirmed platform plans.

Current Trajectory

AI-generated summaries continue expanding across more query types. Early deployment focused on general and educational searches. Recent patterns show growing presence in technical, health, and business-related queries.

Systems also show increased use of structured data sources such as datasets, schema markup, and formal knowledge bases. This allows retrieval models to verify claims and attach citations with greater precision.

Broader Adoption

Retrieval-augmented generation models now appear in enterprise search, customer support tools, and internal knowledge systems. Companies deploy similar architectures to help employees search large document sets. This suggests GEO principles apply beyond public web search.

Commercial search platforms also experiment with tighter links between product data, reviews, and generative answers, potentially leading to hybrid systems combining factual summaries with structured commercial information.

Speculative Possibilities

Some researchers suggest search engines may introduce citation-based ranking layers, where systems weigh how often a source appears in summaries across related queries as a signal of authority.

Another possibility involves paid visibility inside summaries, allowing advertisers to place sponsored references within AI-generated answers, similar to current ad placements. If this occurs, you’d need to separate earned citation from paid inclusion in reporting.

These remain speculative. Treat them as planning inputs rather than settled direction.

GEO Readiness Framework

Preparing for generative visibility requires structured evaluation of how your domain and content interact with retrieval systems.

Authority Footprint

Review how often your domain appears as a cited source across your topic area. Track presence in summaries for learning-driven queries and compare citation patterns with major competitors and institutional sources.

Ask: Are you being selected as a reference in your space, or are other sources capturing that visibility?

Content Structure Quality

Audit your content for retrieval-friendly structure:

- Clear headings that name specific topics

- Direct opening statements that state the main point

- Defined sections that each address one concept or question

- Passages that can stand alone without requiring full-page context

Ask: Can a retrieval system isolate useful passages from your pages, or do key points get buried in dense blocks?

Citation Presence Analysis

Identify which sections of your content earn citations and which never appear. This helps isolate where structure, evidence, or clarity may limit selection.

Ask: Which parts of your content actually show up in AI summaries? Which parts consistently get passed over?

Evidence and Research Depth

Content with sourced figures, named institutions, and dated references offers stronger grounding signals. Audit whether key claims link to recognized research bodies or formal reports.

Ask: Does your content provide the kind of verifiable, concrete information that systems use to ground their answers?

Operational Practices

Run periodic GEO audits. Review a keyword set to record summary presence, citation sources, and section-level matches. Create a baseline for tracking change over time.

Track by query and intent. Group queries by type and topic to identify where generative systems surface summaries versus where traditional listings dominate.

Analyze competitive sources. Review which domains appear alongside or instead of yours. This reveals how the system defines authority within your topic and guides content scope and evidence choices.

Treat GEO as an ongoing system review. Revisit structure, evidence, and authority signals as platform behavior changes.

Key Takeaways

Generative search systems have changed what visibility means. Retrieval and citation now shape exposure more than list position. Systems assemble answers from selected passages and present a small set of sources at the top of the page.

Citation is the new visibility unit. A source appearing inside the answer gains exposure even when users don’t visit the site. A source ranking well but never appearing in summaries remains unseen.

Authority, structure, and extractable facts drive selection. Domains with clear institutional signals and content with defined sections, direct claims, and sourced data appear more often in summaries.

Design for passage-level retrieval. Pages built so each section can stand on its own perform better than pages built only for full-page reading.

Measurement must evolve. Citation frequency, brand presence in summaries, and source persistence provide a closer view of reach than rankings and session counts alone. Traditional metrics still signal engagement and conversion; they just no longer capture early-stage exposure.

Plan for learning-driven queries. Informational, question-based, and multi-concept searches trigger summaries most often. Coverage addressing these queries creates more retrieval and citation opportunities than late-stage transactional terms alone.

Accept platform dependency. You can adjust content and structure, but you can’t fully control how platforms retrieve and present sources. Build for visibility while acknowledging the limits of influence.

As generative systems expand across public and enterprise search, this approach will shape how organizations manage presence, credibility, and reach in AI-driven discovery.

FAQs

What is GEO?

GEO (Generative Engine Optimization) is the practice of optimizing content so it gets surfaced and cited by AI-powered search systems like ChatGPT, Perplexity, and Google’s AI Overviews. Unlike traditional SEO, which focuses on ranking web pages in a list of blue links, GEO focuses on structuring content so generative systems can extract, summarize, and reference it in their responses.

Why does GEO matter for B2B companies?

B2B buyers increasingly use AI-powered tools to research solutions, compare vendors, and answer technical questions. If your content isn’t structured for generative retrieval, it risks being invisible in these new search experiences, even if it ranks well in traditional search results. Early adoption gives B2B companies a competitive advantage as this shift accelerates.

Does GEO replace SEO?

GEO doesn’t replace SEO; it builds on it. Traditional SEO fundamentals like quality content, topical authority, and technical site health still matter. GEO adds a structural layer on top, ensuring your content is formatted in a way that AI systems can easily extract and cite. Think of GEO as an evolution, not a replacement.

What are the structural requirements for GEO content?

There are four key structural requirements: use clear, descriptive headings that signal what each section covers; place direct answers or key claims in the opening sentence of each section; define terms in plain language to match explanation-based queries; and scope your claims with specific time frames, industries, or conditions to improve match accuracy with user questions.

Why should each section open with a direct answer?

Generative retrieval models often prioritize early sentences in a section because they typically contain the core claim or definition. When a section opens with background, narrative, or context-setting language, the main point gets buried, reducing the likelihood that the AI system will extract and surface it in its response.

How can you tell whether your content needs restructuring?

Audit your content against a simple pattern: does each section’s heading clearly name the topic? Does the first sentence state the point directly? Do the following lines provide supporting evidence or detail? If your sections begin with vague introductions, lack defined terms, or make broad claims without specific conditions, they likely need restructuring for generative visibility.

How do you measure GEO performance?

Measuring GEO performance is still evolving, but you can start by monitoring referral traffic from AI platforms, tracking brand mentions in AI-generated responses using tools built for this purpose, and testing how your key topics appear in systems like ChatGPT, Perplexity, and Google AI Overviews. As the space matures, expect more dedicated analytics tools to emerge for tracking generative visibility.

Resources

- Google Search Central – AI Overviews Documentation: https://developers.google.com/search/docs/appearance/ai-overviews

- Seer Interactive – AI Overviews CTR Impact Research: https://www.seerinteractive.com/insights/aio-impact-on-google-ctr-september-2025-update

- Search Engine Journal – AI Overviews Appearance Data: https://www.searchenginejournal.com/google-ai-overviews-appear-on-21-of-searches-new-data/560471/

- Microsoft Research – LLM Retrieval and Search: https://www.microsoft.com/en-us/research/publication/retrieval-augmented-generation-for-knowledge-intensive-nlp-tasks/

- OpenAI – Retrieval-Augmented Generation and System Design: https://openai.com/research