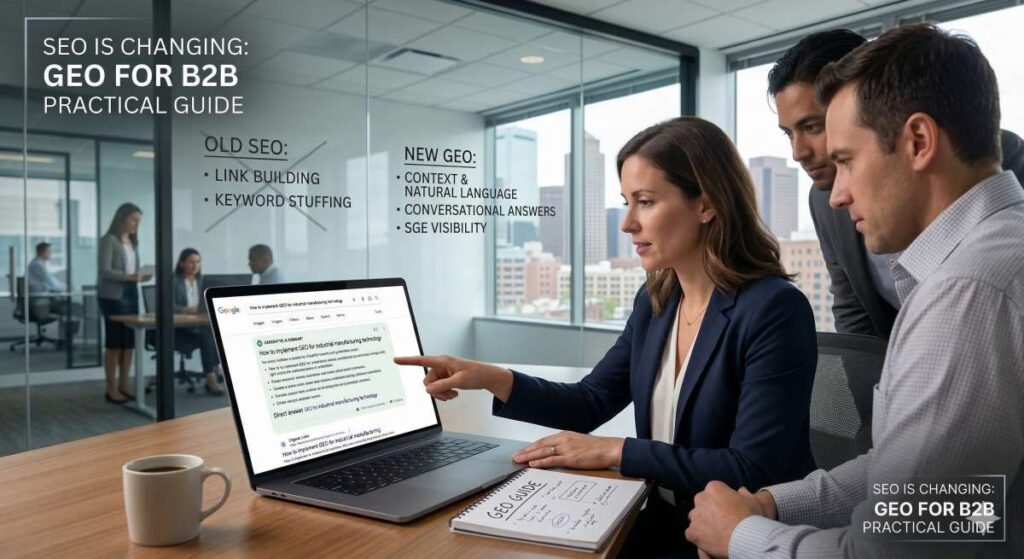

Generative search systems are changing visibility by answering questions on results pages and citing selected sources within those answers. Google states that its AI Overviews provide explanations with links to multiple sources, rather than only presenting a ranked list (Google Search Central, 2025). This design changes how users search and affects brand visibility.

Traditional search engine optimization (SEO) metrics track rankings, clicks, and sessions. These measures describe navigation to websites. They do not measure influence when users read an on-page answer without visiting a site.

Industry data shows more zero-click searches alongside lower click-through rates, which weakens the link between ranking position and traffic. Visibility now depends on whether a page supplies content that the system can retrieve and cite in a generated answer, not only on where the page appears in a list.

This report analyzes how generative search systems retrieve, evaluate, and assemble content into answers. It presents a practical framework for generative engine optimization (GEO) that explains retrieval, citation, and content design.

“Retrieval” means the system’s process for finding candidate pages or passages. “Citation” means the system’s selection of specific sources to link within the answer. “Content design” means how a page presents information so the system can extract and use it accurately. The analysis uses platform guidance, industry research, and observed behavior in AI Overviews and similar features.

The report provides B2B teams with a clear explanation of generative visibility, guidance on adapting existing SEO programs for AI search, and a checklist for preparing websites for generative retrieval and citation.

Introduction

SEO programs were built around stable, predictable user behavior: users entered a query, scanned a ranked list, and selected a result to visit. Performance measurement followed this pattern. Ranking position, click-through rate, and session volume mattered because they described how search systems delivered information and how users accessed it.

| Dimension | Traditional SEO | Generative Search (GEO) |
|---|---|---|
| Retrieval Unit | Full web pages / documents | Passages, entities, and concepts |
| Matching Method | Keyword relevance + backlinks | Semantic similarity (meaning-based) |
| Results Format | Ranked list of blue links | Synthesized answer with cited sources |
| User Action | Click a link to access information | Read answer on the results page |
| Competitive Model | Pages compete for rank position | Pages compete for citation inclusion |
| Authority Signal | Domain authority + inbound links | Explanatory clarity + cross-source consistency |
| Content Scope | Narrow pages targeting specific keywords | Broader explanations supporting many queries |

Generative answers disrupt this model by changing where information appears and how users consume it. AI Overviews and similar features respond directly on results pages, often giving users enough information to continue research without opening a website. Visibility increasingly occurs through inclusion in generated answers rather than through page visits alone.

Observed behavior supports this shift. Seer Interactive reports that organic click-through rate dropped from 1.41% to 0.64% for queries displaying AI Overviews, even when a ranked list still appeared on the page (Seer Interactive, 2025). Presence on the results page no longer reliably leads to interaction.

Zero-click behavior shows the same pattern. Search Engine Land reports that 27.2% of U.S. searches ended without a click in March 2025, up from 24.4% one year earlier (Search Engine Land, 2025). More users complete tasks within the search interface, reducing session counts while exposure to brand names and content excerpts continues.

These conditions complicate how B2B teams interpret performance. Rankings may hold steady and content output may rise, yet traffic declines because users satisfy research needs before reaching a site. This pattern does not indicate reduced relevance or weaker demand. It follows directly from how search systems now deliver information.

AI Overviews also change competitive visibility. A single generated answer can include several sources, presenting brand names and explanations together rather than in strict order. Inclusion carries weight comparable to ranking position. A page that supports part of an answer gains exposure even when it ranks below other results.

This environment challenges earlier measurement assumptions. Rankings describe placement in a list and clicks describe navigation behavior, but neither explains influence on generated answers. B2B SEO programs need a framework that explains how content reaches users before any site visit occurs.

The sections that follow examine how retrieval behavior has changed, how generative search systems select and assemble content, and how teams can adjust content strategy and measurement to align with these systems.

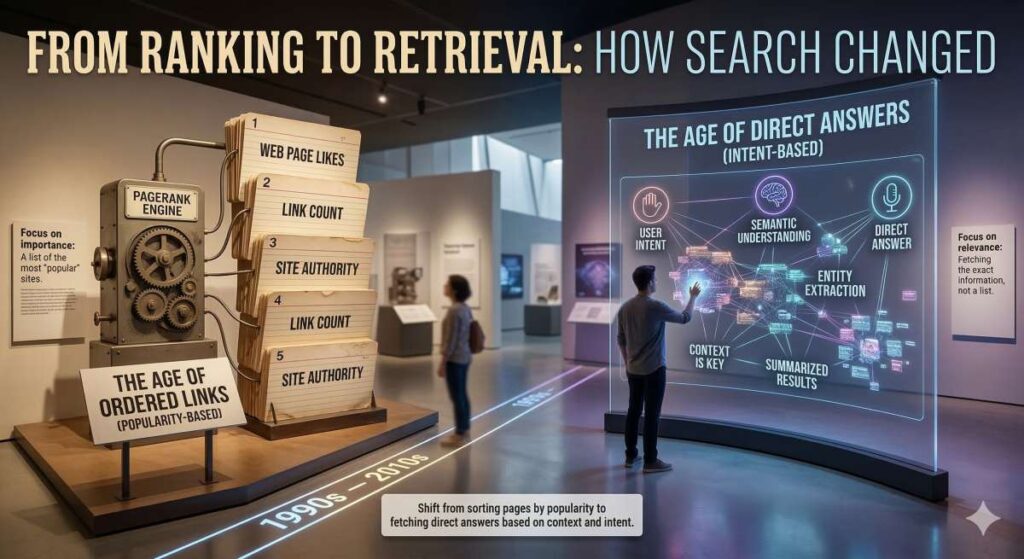

From Ranking to Retrieval: How Search Changed

Traditional search systems retrieve documents. They evaluate pages based on keyword relevance, links, and authority signals, then present results as a ranked list. Users decide which link to open, so visibility moves from ranking position to site visit.

Generative search systems retrieve meaning rather than documents. They evaluate passages, entities, and concepts across multiple sources simultaneously. Instead of sending users to a single page, these systems assemble an explanation that appears directly on results pages, with links shown as supporting sources.

AI Overviews combine information from multiple sources and present it directly in search results to help users understand a topic quickly (Google Search Central, 2025). This design treats search as an answer system instead of a navigation tool. Links still allow deeper exploration, but they no longer deliver the main explanation.

Retrieval under this model relies on semantic similarity. OpenAI describes retrieval as matching the meaning of a query to the meaning of stored content, even when the words used do not closely match (OpenAI, n.d.). This method lets systems connect different phrasings to the same idea.

Generative search changes how content competes for visibility. Pages no longer compete only on keyword usage but also on explanatory usefulness. AI-driven search systems assess whether content provides clear definitions, shows how ideas relate, and gives examples that support an answer. A page that explains one part of a topic can appear next to other sources that explain other parts.

Navigation still plays a role in some cases. Users who search for a specific site or action depend on links. For many informational and research queries, AI systems prioritize explanation. They present answers first and place navigation options second.

This retrieval model also affects scale. A single page that explains a topic clearly can support many different questions. A page written narrowly for one phrase supports fewer retrieval paths. Broader coverage and clear structure increase how often search systems can reuse content.

This context explains why later sections focus on retrieval and synthesis alongside ranking. Ranking still exists, but retrieval and answer assembly now influence visibility earlier in the search process. B2B SEO programs need to consider both models to accurately interpret performance.

How Generative Search Systems Retrieve and Use Content

Generative search systems rely on a retrieval process that differs from traditional keyword-based search. Instead of matching queries to pages based on exact terms and backlinks, these systems retrieve and assemble information using semantic similarity. This change affects which content appears in AI-generated answers and how content is used.

Large language model systems retrieve information by comparing the meaning of a query to the meaning of stored text segments. OpenAI documentation describes retrieval as a semantic process that surfaces relevant content even when the query and source text share few or no keywords (OpenAI, n.d.). This approach allows systems to connect questions with explanations, definitions, or examples that express the same idea using different language. Keyword overlap plays a smaller role in determining whether content enters the retrieval set.
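Meaning-based matching can be sketched with cosine similarity over embedding vectors. This is a minimal illustration, not a description of any specific search engine: the three-dimensional vectors and the passage names are hand-picked, hypothetical values, and a real system would obtain much longer vectors from an embedding model.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings, chosen by hand for illustration only.
query_vec = [0.9, 0.1, 0.2]  # stands in for a question about vendor security audits
passages = {
    "soc2_definition": [0.8, 0.2, 0.3],  # related meaning, possibly different wording
    "pricing_page": [0.1, 0.9, 0.1],     # unrelated meaning
}

# Rank passages by how close their meaning vector is to the query vector.
ranked = sorted(
    passages,
    key=lambda name: cosine_similarity(query_vec, passages[name]),
    reverse=True,
)
print(ranked)
```

The passage whose vector points in nearly the same direction as the query ranks first, regardless of whether the two texts share any words.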

| Quality | What It Means | What Weakens It | Source |
|---|---|---|---|
| Clarity | Defines terms directly, explains relationships, answers questions in plain language | Ambiguous phrasing, dense jargon, indirect explanations | Google Search Central, 2023 |
| Coverage | Addresses a topic fully within its scope: what, why, and how | Omitting key steps or assumptions; partial explanations | Google Search Central, 2025 |
| Consistency | Multiple independent pages describe the same concept in similar terms | Unusual phrasing, unsupported claims, shifting definitions | OpenAI Retrieval Docs |

Generative retrieval operates at the level of text segments rather than full pages. AI-driven search systems divide content into chunks, often paragraphs or short sections, and evaluate each chunk independently. A single page may contribute multiple chunks, only one chunk, or none at all. Overall page ranking carries less influence in generative retrieval than the clarity and relevance of individual sections. A long page with mixed topics can perform worse than a shorter page where each section clearly addresses a specific concept.
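The chunk-level evaluation described above can be sketched as follows. This is a simplified illustration under stated assumptions: chunks are split on blank lines, and a word-overlap (Jaccard) score stands in for the embedding similarity a production system would use. The function names and the sample page are hypothetical.

```python
import re

def split_into_chunks(page_text):
    """Split a page into paragraph-level chunks on blank lines."""
    return [p.strip() for p in re.split(r"\n\s*\n", page_text) if p.strip()]

def tokenize(text):
    """Lowercase word set; punctuation is discarded."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def overlap_score(query, chunk):
    """Jaccard overlap of word sets, a crude stand-in for semantic similarity."""
    q, c = tokenize(query), tokenize(chunk)
    union = q | c
    return len(q & c) / len(union) if union else 0.0

def top_chunks(query, page_text, k=1):
    """Score every chunk independently and return the k best."""
    chunks = split_into_chunks(page_text)
    return sorted(chunks, key=lambda c: overlap_score(query, c), reverse=True)[:k]

page = """SOC 2 is an audit report on a service organization's controls.

Our team enjoys hiking, and the office has a great coffee machine.

A SOC 2 audit is performed by an independent CPA firm."""

best = top_chunks("what is a soc 2 audit report", page, k=1)
print(best[0])
```

Each paragraph is scored on its own, so the off-topic middle paragraph contributes nothing even though it sits on the same page as two relevant ones.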

Once retrieved, content undergoes weighting before it appears in a generative search response. Weighting determines which chunks influence the final answer and how much influence each chunk carries. Google states that its AI systems aim to retrieve content that helps users understand a topic, rather than content designed primarily to rank in search results (Google Search Central, 2023). Clarity and explanatory value matter more than optimization patterns such as keyword repetition or exact-match headings.

Three qualities consistently affect how AI systems weigh retrieved content:

Clarity

Chunks that define terms directly, explain relationships, or answer questions in plain language are easier for models to reuse. Chunks with ambiguous phrasing, dense jargon, or indirect explanations are less likely to be used because AI systems struggle to extract a clear answer from them.

Coverage

AI systems favor content that addresses a topic fully within its scope. A definition that explains what something is, why it matters, and how it works carries more weight than a partial explanation. Coverage does not mean length; it means completeness relative to the question being answered. Content that omits key steps or assumptions receives less weight because the system must fill gaps using other sources.

Consistency

When multiple independent pages describe the same concept in similar terms, AI systems gain confidence in that information. OpenAI documentation explains that retrieval systems can surface multiple semantically related results, which allows the model to compare and synthesize information rather than rely on a single source (OpenAI, n.d.). Consistent explanations across sites increase the likelihood that a given framing appears in the final output.

This retrieval and weighting process changes how authority works. Authority no longer depends only on domain-level signals or inbound links. It also depends on whether content aligns with how a topic is commonly explained across the web. Pages that introduce unusual phrasing, unsupported claims, or narrow interpretations struggle to appear because they do not match the broader semantic pattern.

Assembly is the final step. After weighting, the generative search system combines selected chunks into a synthesized answer. This answer may draw from several sources, each contributing a sentence or concept. Google confirms that its AI-powered search experiences aim to show a range of sources and encourage users to explore content on the web, rather than direct them to a single page (Google Search Central, 2025). Inclusion matters even when a page does not rank first or receive a click.

For B2B content teams, this model has practical consequences. Content must stand on its own at the section level. Each paragraph should answer a clear question or explain a clear concept. Pages that rely on surrounding context, internal links, or implied meaning perform poorly in retrieval systems because chunks lose that context when evaluated independently.

Generative search systems also reward stability. Content that changes meaning across updates or contradicts related pages reduces consistency signals. Clear definitions, steady terminology, and repeatable explanations improve retrieval outcomes over time.

Understanding retrieval, weighting, and assembly provides a working mental model for GEO. Visibility depends less on where a page ranks and more on whether its content segments clearly explain a topic in a way that aligns with how other trusted sources describe it. This explains why many traditional SEO tactics no longer predict results and why content design now plays a central role in generative visibility.

AI Overviews and Generative Results in Practice

AI Overviews change how information appears on search results pages and how users interact with it. For many informational queries, Google displays an AI-generated explanation above traditional listings, with links to cited sources included in the answer. Users can read the explanation directly on the results page, changing how organic listings function.

Google states that these AI-powered search experiences aim to help users quickly understand a topic and then explore supporting sources for more details (Google Search Central, 2025). The results page now delivers part of the explanation that websites previously provided on their own pages. For early research and basic questions, users often receive enough context from the Overview to continue without visiting a site.

| Metric | Without AI Overview | With AI Overview | Change | Source |
|---|---|---|---|---|
| Organic CTR (all results) | 1.41% | 0.64% | −54.6% | Seer Interactive, 2025 |
| Organic CTR (brand cited in AIO) | 0.74% | 1.02% | +37.8% | Seer Interactive, 2025 |
| Paid CTR (brand cited in AIO) | 7.89% | 11.00% | +39.4% | Seer Interactive, 2025 |
| Zero-click searches (U.S.) | 24.4% (Mar 2024) | 27.2% (Mar 2025) | +2.8 pts | Search Engine Land, 2025 |
| Position #1 organic CTR drop | Baseline | −34.5% when AIO present | −34.5% | Search Engine Land, 2025 |

Observed behavior aligns with this structure. Seer Interactive reports that organic click-through rate declined from 1.41% to 0.64% on queries where AI Overviews appeared, even when pages still remained visible on the results page (Seer Interactive, 2025). Top ranking alone no longer leads to interaction when the answer appears before the listings.

Citations within an Overview affect outcomes for individual brands. Seer Interactive found that organic click-through rate increased from 0.74% to 1.02% when a brand appeared in an AI Overview, compared with appearing only in traditional results (Seer Interactive, 2025). Inclusion in the explanation produces higher engagement than exclusion, even as overall click volume declines.

Paid search shows a similar pattern. Seer Interactive reports that paid click-through rate increased from 7.89% to 11% when a brand appeared in an AI Overview (Seer Interactive, 2025). Users who click after reading an Overview often act with more context, responding to sources that already contributed to their understanding.
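The relative changes implied by these click-through figures can be checked with a short calculation. The sketch below uses only the CTR values cited above; the helper name `relative_change` is illustrative. Note the difference between a change in percentage points (1.41% to 0.64% is a drop of 0.77 points) and a relative change (a drop of roughly 54.6%).

```python
def relative_change(before, after):
    """Percent change from `before` to `after`."""
    return (after - before) / before * 100

# CTR values in percent, as cited above (Seer Interactive, 2025).
organic_all = relative_change(1.41, 0.64)
organic_cited = relative_change(0.74, 1.02)
paid_cited = relative_change(7.89, 11.00)

for label, value in [
    ("Organic CTR, AI Overview present", organic_all),
    ("Organic CTR, brand cited", organic_cited),
    ("Paid CTR, brand cited", paid_cited),
]:
    print(f"{label}: {value:+.1f}%")
```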

These results clarify how AI Overviews affect traffic and visibility. Overviews reduce the need to click for definitions, comparisons, and high-level explanations. At the same time, they direct attention to sources that support the explanation itself. Visibility increasingly depends on whether content appears in the generated answer, not only on where it ranks in a list.

For B2B teams, this behavior changes how success should be evaluated. A page can lose sessions while still influencing research by appearing across multiple Overviews. Rank position and session counts alone do not capture this effect. In generative search, citation presence provides a more accurate signal of visibility than traffic itself.

Why Keyword-Centric SEO Breaks in Generative Search

Keyword targeting once offered a reliable way to estimate search visibility. Pages that closely matched query terms and ranked well often captured traffic because users relied on ranked links to find information. Generative search weakens this relationship. AI-powered search systems that generate answers select content based on whether it explains a question clearly, not on keyword matching alone.

| Factor | Keyword-Centric SEO | Topic-Based GEO |
|---|---|---|
| Targeting approach | One page per keyword phrase | One page covering a topic across related queries |
| Matching mechanism | Exact and close-match keyword alignment | Semantic meaning and explanatory depth |
| Content scope | Narrow; optimized for one phrase | Broad; definitions, steps, comparisons, limits |
| Role of keywords | Direct optimization target | Demand signal for topic planning |
| Structured markup impact | HowTo / FAQ rich results drove visibility | Rich results reduced; clear prose matters more |
| Language variation | Disadvantage: dilutes keyword density | Advantage: semantic retrieval connects varied phrasing |
| Unit of value | Query match → rank → click | Answer quality → retrieval → citation |

Keywords describe what users type. They do not describe what an answer requires. A query signals intent, but an adequate answer often includes definitions, process steps, comparisons, limits, and background. Generative search systems assemble explanations from content that covers these elements. Pages that repeat query terms without explaining the subject in full contribute little to generated answers.

Clear topic coverage now carries more weight than narrow phrase targeting. Content that explains a subject across related aspects can support many different queries, even when it does not target each one directly. Pages written around a single phrase often lack the range needed for synthesis, which limits their use in AI-generated answers. Exact-match optimization has less influence on generative results.

Changes to Google’s search features support this pattern. In 2023, Google announced that HowTo rich results would no longer appear in desktop search results and that FAQ rich results would primarily appear on authoritative government and health sites (Google Search Central, 2023). These updates reduced how often structured formats appear in results, even when pages continue to rank, showing that structured markup alone no longer guarantees prominent display.

Click behavior reinforces the same conclusion. Search Engine Land reports that the click-through rate for the first organic result dropped by 34.5% when AI Overviews appeared, based on Ahrefs data across approximately 300,000 keywords (Search Engine Land, 2025). Ranking first no longer ensures interaction when the system satisfies the query before users reach organic listings.

These conditions change how SEO teams should interpret performance. A page can rank well and still reach fewer users if generative answers rely on other sources. At the same time, a page can appear within generated answers without holding the top organic position. Keyword reports track rank and traffic, but they do not capture contribution to generated explanations.

Exact phrasing also carries less influence. Because generative retrieval relies on meaning rather than string matching, content that explains concepts using varied language can perform well. This reduces the advantage of precise keyword alignment and increases the value of clear explanation.

Keywords still matter as planning inputs. They help teams understand demand and group related questions. Their role moves away from direct optimization targets and toward topic planning signals. SEO teams identify questions through keywords and then design content that answers those questions fully.

Keyword-centered SEO breaks down in generative search because it measures the wrong unit of value. Queries remain important, but answers now determine visibility. Content gains exposure when it explains a topic in a form that systems can retrieve, compare, and reuse across related questions.

Entities, Topics, and Authority in GEO

GEO depends on how AI-driven search systems identify and connect entities across content. Entities include identifiable things: people, companies, products, standards, and abstract concepts. In generative search, entities provide systems with a stable structure for understanding topics and assembling answers from multiple sources. These references connect questions to explanations.

| Entity Strategy Element | Description | Impact on GEO Visibility |
|---|---|---|
| Clear entity naming | Each product, standard, or concept has one stable name and definition | AI systems connect content to related questions even with different phrasing |
| Relationship mapping | Content explains how entities relate: workflows, dependencies, limits | Supports answers involving steps, processes, and multi-concept queries |
| Topic clusters | Groups of pages covering a core concept from multiple angles | Systems associate the site with the topic as a whole |
| Consistent terminology | Same terms across guides, product pages, and documentation | Reinforces consistency signals across retrieval results |
| E-E-A-T alignment | Content reflects experience, expertise, and real-world constraints | Helps search systems judge whether content supports user understanding |

Generative systems retrieve meaning without relying on exact wording. When content names entities clearly and explains what they represent, AI-driven search systems can connect that content to related questions even when users phrase those questions differently. A page that defines a concept, explains its role, and links it to related ideas gives AI systems material they can reuse across answers.

GEO places value on explaining how entities relate to one another. Generative systems look for signals that show how concepts connect. A page may explain how a product fits into a workflow, how a standard applies to a process, or how a regulation affects an industry. These relationships allow systems to answer questions that involve steps, limits, or dependencies. Content that lists facts without explaining how those facts connect supports fewer explanations.

Google’s published guidance aligns with this approach. Google says that its ranking system aims to surface original, high-quality, people-first content and evaluate qualities associated with experience, expertise, and trust (Google Search Central, 2023). Content reflects expertise when it explains how ideas work together within a topic. It shows experience when explanations reflect real use, constraints, or outcomes. These qualities help search systems judge whether content can support user understanding.

Topical authority develops through consistent coverage of related entities over time. Authority builds when a site explains a topic from several angles using stable definitions and consistent language. Pages that cover requirements, processes, risks, and outcomes reinforce one another because they repeat shared entities in the same way. This consistency helps search systems associate the site with the topic as a whole.

Industry analysis reinforces the need for this structure. Seer Interactive reports that AI-driven search systems provide limited query-level reporting and send less measurable traffic, which reduces the usefulness of traditional keyword-based metrics (Seer Interactive, 2024). As measurement becomes less direct, consistent topic coverage becomes more important for visibility.

This shift affects how content teams plan strategy. Content teams benefit from building topic clusters around core entities. Each cluster defines the main concept, explains related concepts, and answers common questions using shared terms. Over time, this structure helps systems recognize the site as a reliable source on the topic.

In generative search, authority comes from clear explanations of entities and their relationships. Keyword frequency alone carries little weight. Search systems respond to consistent coverage, clear definitions, and stable terminology that they can retrieve, compare, and reuse across many answers.

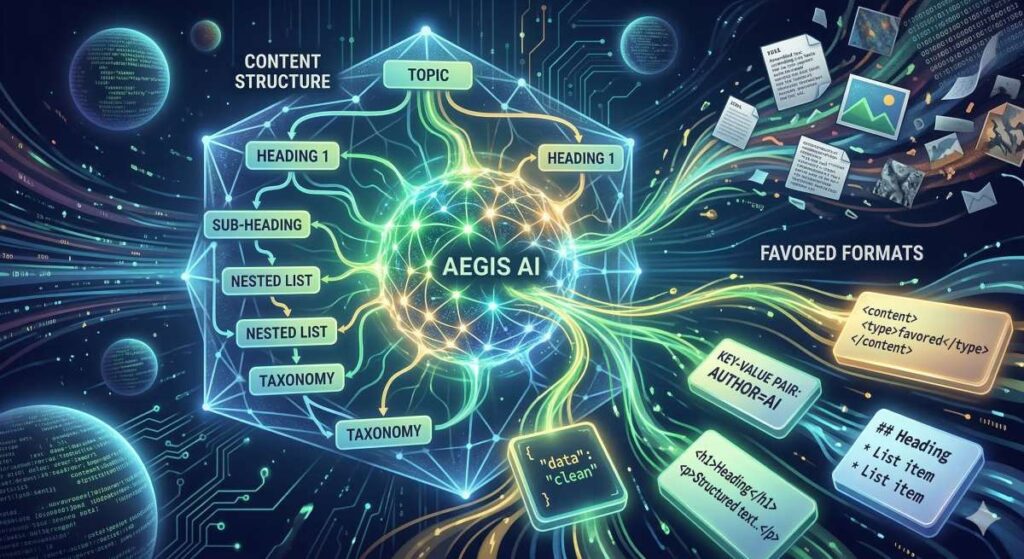

Content Structure and Formats Favored by AI Systems

Generative search systems pull information from content at the section level. Structure affects whether systems can extract, compare, and reuse that information. Pages written for clear answers work better than pages written mainly to persuade or guide scrolling.

Google advises creating content that makes answers easy to identify and cite in AI-powered search experiences (Google Search Central, 2025). AI systems need clear boundaries. They must recognize where an answer starts and where it ends. Clear headings set those boundaries. Placing a direct answer immediately after a heading reduces guesswork during retrieval.

Headings work best when they contain a question or a concept directly. A heading such as “What Is SOC 2?” tells the AI system exactly what the following section explains. The system can then match that heading to similar questions and treat the paragraph below it as a candidate answer. Headings that rely on wordplay or implied meaning make retrieval harder because the AI system has to infer intent.

| Structure Element | Best Practice | Why It Matters for GEO |
|---|---|---|
| Headings | Use a question or clear concept (e.g., “What Is SOC 2?”) | AI systems match headings to queries and treat the section below as a candidate answer |
| Section length | Keep paragraphs focused on one idea per section | Systems combine passages from multiple sources; clean single-idea chunks reduce filtering errors |
| Definitions | Place definitions near the top of each section | Retrieval systems evaluate sections independently; standalone definitions increase reuse |
| Examples | Place examples immediately after definitions | Models align related passages more easily during synthesis (OpenAI) |
| Cross-page consistency | Use similar patterns for headings, definitions, and examples across related pages | Predictable structures improve AI confidence during retrieval and citation |
| Attribution clarity | Direct claims followed by brief explanations | AI systems cite sources when they can attribute a statement to a specific section |

Short sections support reuse. Generative search systems often combine passages from several sources to form one response. Paragraphs that focus on one idea give the system clean input. When a section mixes several ideas, the system has to filter, which lowers accuracy.

Clear definitions increase reuse across questions. When a section defines a term directly, AI systems can apply that definition in many contexts. Definitions that depend on surrounding text lose value because retrieval systems evaluate sections on their own. Placing definitions near the top of a section improves alignment with how systems retrieve content.

Examples add value when they follow definitions closely. An example shows how a concept applies in practice, which helps search systems compare explanations across sources. OpenAI documentation explains that well-structured text improves retrieval quality because models can align related passages more easily during synthesis (OpenAI, n.d.). Examples that stay focused on the defined concept strengthen that alignment.

Consistency across pages also matters. When related pages follow similar patterns for headings, definitions, and examples, generative search systems encounter predictable structures. Predictability improves confidence during retrieval and citation.

Clear structure also supports citation. AI systems cite sources when they can attribute a statement to a specific section. Direct claims followed by brief explanations make attribution easier than dense narrative text.

For B2B teams, this leads to practical design choices. Pages should answer a limited set of related questions. Each section should define terms, explain relationships, and use steady language. Structure that supports extraction and reuse improves visibility within generated answers as generative search expands.
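Some of these structural choices can be spot-checked automatically. The sketch below is a rough heuristic, not a measure any search system is known to apply: it flags sections whose opening sentence is long (suggesting the direct answer is buried) or that contain many paragraphs (suggesting several ideas are mixed). The function names and both thresholds are assumptions chosen for illustration.

```python
import re

def first_sentence(text):
    """Return the text up to the first sentence-ending punctuation mark."""
    match = re.search(r".+?[.!?](?=\s|$)", text.strip(), flags=re.S)
    return match.group(0) if match else text.strip()

def review_section(body, max_answer_words=30, max_paragraphs=3):
    """Return warnings for one section body. Thresholds are illustrative assumptions."""
    paragraphs = [p for p in re.split(r"\n\s*\n", body) if p.strip()]
    if not paragraphs:
        return ["section has no body text"]
    warnings = []
    if len(first_sentence(paragraphs[0]).split()) > max_answer_words:
        warnings.append("opening sentence is long; consider leading with a direct answer")
    if len(paragraphs) > max_paragraphs:
        warnings.append("section may mix several ideas; consider splitting")
    return warnings

section_body = "SOC 2 is an audit report on a service organization's controls."
print(review_section(section_body))
```

A check like this only catches surface patterns. Whether a section actually answers its heading still needs editorial review.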

Ranking vs Citation: Two Different Visibility Models

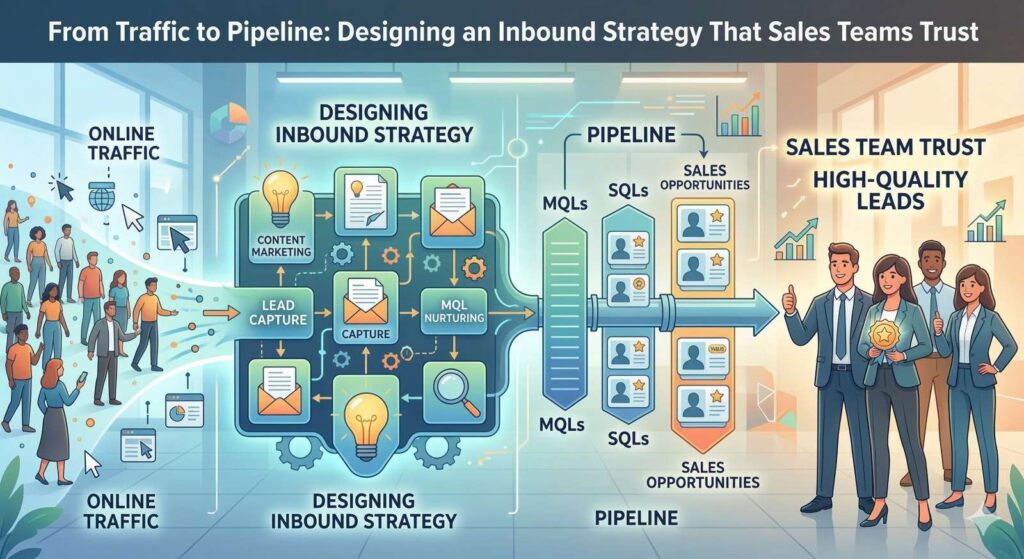

Search visibility now follows two different models. One model relies on ranking. The other relies on citation. This difference explains why traditional SEO metrics no longer describe performance well in generative search.

Ranking measures where a page appears in a list of results. Pages compete for placement based on relevance, authority, and technical signals. Higher placement increases the chance that a user sees a link and clicks it. This model assumes that visibility leads to visits and that visits signal success. For many years, this assumption matched how users used search.

Citation works differently. Generative systems answer questions directly on results pages and reference sources that support specific parts of the explanation. Visibility comes from appearing within the generated answer, not from position in a ranked list. A cited source may appear in a paragraph or list even if it does not rank first or receive a click.

Both models operate simultaneously. A page can rank well and still contribute little if the search system builds the explanation from other sources. A page can rank lower and still influence understanding if it explains one part of the topic clearly. This separation explains why stable rankings no longer lead to stable traffic.

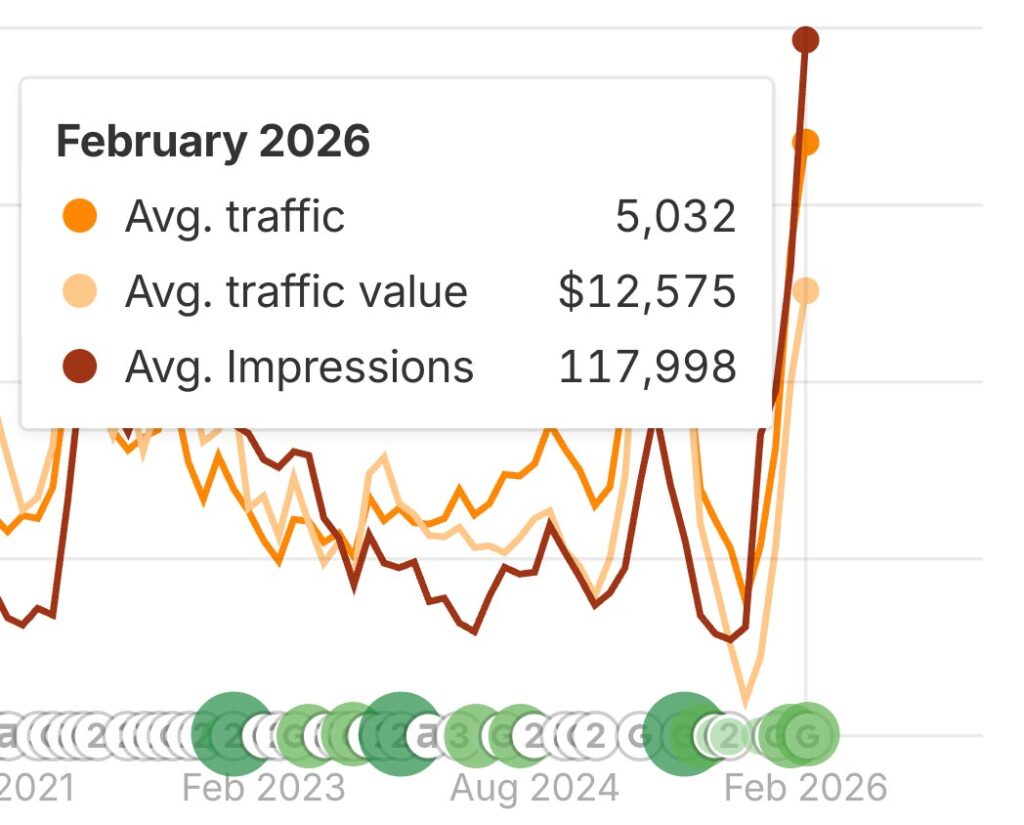

BrightEdge data, as reported by Search Engine Land, illustrates this pattern: search impressions rose by 49% year over year while clicks fell by 30% across enterprise sites (Search Engine Land, 2025). Users see content more often in search results, but they click less frequently. Exposure increases even as recorded interaction declines.

In generative results, exposure often happens without a visit. Users read the answer, notice the cited sources, and continue research. The cited page influences understanding even though analytics show no session. Ranking metrics capture placement, but not this type of contribution.

Citation-based visibility aligns with how generative search systems operate. The system selects sources that explain specific parts of an answer and combines several sources to cover different aspects of a question. Each citation shows that the system relied on that source for a defined statement.

This model changes competition. Pages no longer compete only for position. They compete for inclusion. Several competitors can appear together in one generated answer. In this setting, absence matters more than small changes in rank. A page that does not appear in generated answers loses exposure during research, even if it ranks on the first page.

For B2B teams, this distinction affects strategy and measurement. Ranking still matters for navigational and transactional searches. Citation matters more for research and explanatory searches. Tracking both gives a clearer view of visibility as generative search continues to grow.

Measuring GEO Performance Without Clicks

Generative search changes how performance should be measured. When search systems answer questions directly on results pages, many interactions end without a site visit. Clicks no longer show whether content shaped understanding or influenced decisions. Measurement needs to focus on signals that show presence and contribution to generated answers.

Citation presence provides a practical starting point. When an AI Overview or similar result references a source, the system signals that it relied on that content for a specific claim. Each citation shows contribution, even if no click occurs. Tracking how often a brand or page appears as a cited source across related queries helps teams understand topic-level visibility when session counts remain flat.
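As a rough illustration of topic-level citation tracking, the sketch below computes the share of reviewed queries per topic in which a domain was cited. It assumes the team already has a log of reviewed generated answers, collected manually or through a monitoring tool. The record layout, topics, and domain names are hypothetical.

```python
from collections import defaultdict

# Hypothetical observations: one record per query whose generated answer was reviewed.
observations = [
    {"topic": "zero trust", "query": "what is zero trust", "cited": ["example.com", "vendor-a.com"]},
    {"topic": "zero trust", "query": "zero trust vs vpn", "cited": ["vendor-a.com"]},
    {"topic": "sase", "query": "what is sase", "cited": ["example.com"]},
    {"topic": "sase", "query": "sase architecture", "cited": ["example.com", "vendor-b.com"]},
]


def citation_rate_by_topic(observations, domain):
    """Return, per topic, the fraction of reviewed queries that cited `domain`."""
    checked = defaultdict(int)  # queries reviewed per topic
    cited = defaultdict(int)    # queries per topic where the domain was cited
    for obs in observations:
        checked[obs["topic"]] += 1
        if domain in obs["cited"]:
            cited[obs["topic"]] += 1
    return {topic: cited[topic] / checked[topic] for topic in checked}


rates = citation_rate_by_topic(observations, "example.com")
print(rates)
```

Reporting a rate per topic rather than a raw count keeps topics with different numbers of tracked queries comparable.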

Branded mentions in generated answers offer a related signal. Some generative search systems name companies or products directly within explanations. Users see these names during research without visiting a site. Tracking how often a brand appears in generated responses helps explain awareness and familiarity that traditional analytics cannot capture.

Influence in generative search often spreads across several sources. A single page may support one part of an answer while other pages support the rest. No single source owns the outcome. Influence builds through repeated exposure. Teams can assess this assisted influence by reviewing which pages appear together in generated answers and whether those appearances align with later actions such as branded searches, demo requests, or sales conversations.
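One way to review which pages appear together is to count co-citations, meaning pairs of sources cited in the same generated answer. The following is a minimal sketch under the assumption that each reviewed answer has been recorded as a list of cited URLs. The URLs shown are placeholders.

```python
from collections import Counter
from itertools import combinations

# Hypothetical data: each inner list holds the sources cited in one generated answer.
answers = [
    ["example.com/guide", "vendor-a.com/docs", "standards.org/spec"],
    ["example.com/guide", "vendor-a.com/docs"],
    ["vendor-b.com/blog", "standards.org/spec"],
]


def co_citation_counts(answers):
    """Count how often each pair of sources is cited in the same answer."""
    pairs = Counter()
    for sources in answers:
        # De-duplicate and sort so each pair has one canonical ordering.
        for a, b in combinations(sorted(set(sources)), 2):
            pairs[(a, b)] += 1
    return pairs


pairs = co_citation_counts(answers)
for (a, b), count in pairs.most_common(3):
    print(f"{a} + {b}: {count}")
```

Pairs with high counts suggest which sources a system tends to combine on a topic, which can inform where a page adds something the others do not.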

Industry analysis supports the need for this shift in measurement. Seer Interactive explains that click-through rate alone no longer represents search performance because AI-driven zero-click behavior breaks the link between visibility and traffic (Seer Interactive, 2024). Users complete research tasks on results pages, which lowers CTR even as exposure grows. Treating this pattern as a loss misreads how search now works.

A practical GEO measurement approach combines several indicators. Teams track citation frequency across priority topics, monitor branded mentions in generated answers, and review how these signals relate to downstream outcomes. Analysis should focus on patterns across topics rather than movement for individual pages.
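To show how citation signals might be compared with downstream outcomes, the sketch below computes a Pearson correlation between monthly citation counts and branded search volume for one topic. All figures are invented for illustration, and a correlation of this kind indicates association only. It does not show that citations caused the change.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length series with at least two points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / sqrt(var_x * var_y)


# Hypothetical monthly figures for a single topic.
citations_per_month = [4, 6, 9, 12, 15, 14]
branded_searches = [210, 230, 260, 300, 340, 335]

r = pearson(citations_per_month, branded_searches)
print(f"correlation: {r:.2f}")
```

With only a few data points per topic, a result like this is best treated as a prompt for further review rather than as evidence on its own.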

This approach complements existing analytics. Sessions and conversions still matter for outcomes. GEO metrics explain how content supports those outcomes earlier in the process, without relying on clicks as the only signal.

What Forward SEO Teams Are Doing Now

Leading SEO teams are adjusting practices to align with generative search behavior. They are changing how they plan content, collaborate internally, and evaluate success. Their actions reflect clear changes in how AI-driven search systems retrieve and cite information.

One visible change involves planning around topics instead of isolated keywords. SEO teams map topics as collections of related entities and questions. Each topic includes definitions, processes, comparisons, and implications. This structure supports retrieval across many related queries. Keyword research still informs demand, but topic maps guide execution.

Investment also moves toward evergreen explanatory content. Forward teams prioritize pages that explain core concepts clearly and remain relevant over time. These pages serve as reference points that systems can cite repeatedly. Updates focus on clarity and completeness rather than frequent rewrites. Stable explanations support consistent retrieval signals.

Collaboration expands beyond SEO roles. Content teams align with subject-matter experts, product leaders, and customer-facing staff to ensure explanations reflect real use cases. This alignment improves accuracy and depth. It also supports consistent terminology across marketing, documentation, and support content.

Search Engine Journal emphasizes the importance of crawl access and content clarity for AI-driven search (Search Engine Journal, 2025). SEO teams review how bots access content, ensure key pages remain indexable, and remove barriers that limit retrieval. They also review formatting to make answers easier to extract.

Industry commentary also shows growing agreement that GEO builds on existing SEO foundations instead of replacing them (Search Engine Journal, 2026). Technical hygiene, site structure, and content quality still matter. The difference lies in emphasis. Teams prioritize explanation quality, entity clarity, and topic coverage because these factors affect citation.

Forward SEO teams also adjust reporting practices. They supplement ranking and traffic reports with citation tracking and brand mention reviews. These reports focus on topic-level trends rather than page-level volatility. Teams use these insights to guide content expansion and refinement.

These changes reflect a broader shift in thinking. SEO teams move from chasing positions to supporting understanding. They design content that systems can retrieve, compare, and reuse. Over time, this approach builds durable visibility as generative search expands.

Forward SEO teams recognize that search now rewards clarity, consistency, and coverage. Their practices align with how AI systems assemble answers and how users consume information. By adapting early, these teams position their content to remain visible as search continues to change.

Practical GEO Readiness Checklist for B2B Websites

This checklist translates the report’s analysis into preparation steps that B2B content teams can apply across existing sites. Each item aligns with how generative systems retrieve, evaluate, and cite content. No single change guarantees inclusion, but together these steps improve retrieval readiness.

GEO Readiness Checklist for B2B Websites

- Crawl access for AI bots: Confirm key pages are not blocked by robots.txt, auth walls, or heavy scripts; review crawl logs

- Entity clarity across pages: Give each concept/product/standard one stable name and clear definition; use consistently across all pages

- Consistent definitions and terminology: Place definitions near section tops; repeat in similar form across related pages

- Clear authorship and sourcing: Add author names, role descriptions, and source citations; link to primary sources

- Section-level completeness: Each heading introduces a question/concept → direct explanation → example; no reliance on surrounding context

- Stable updates and version control: Update for clarity/accuracy without frequently changing core definitions or terminology

Crawl access for AI bots

Ensure that content remains accessible to search crawlers and related retrieval systems. Pages that block bots through robots.txt, authentication walls, or aggressive script loading reduce the chance of retrieval. Review crawl logs and indexing reports to confirm that key explanatory pages load cleanly and return stable responses. Google advises keeping content accessible so AI-powered search experiences can surface and cite it (Google Search Central, 2025).
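A robots.txt check can be scripted with Python's standard `urllib.robotparser` module. The sketch below parses an inline, made-up policy and tests a few URLs against two example crawler tokens. The policy, URLs, and choice of tokens are assumptions for illustration. In practice a team would load its own robots.txt and the list of bots it cares about.

```python
from urllib.robotparser import RobotFileParser

# Made-up robots.txt policy used only for this example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

User-agent: GPTBot
Disallow: /
"""


def crawl_access(robots_txt, agents, urls):
    """Return {(agent, url): allowed} according to the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        (agent, url): parser.can_fetch(agent, url)
        for agent in agents
        for url in urls
    }


access = crawl_access(
    ROBOTS_TXT,
    agents=["Googlebot", "GPTBot"],
    urls=["https://example.com/guides/geo", "https://example.com/private/pricing"],
)
for (agent, url), allowed in access.items():
    print(f"{agent:10} {url:40} {'allowed' if allowed else 'blocked'}")
```

This covers robots.txt rules only. Authentication walls and script-dependent rendering need separate checks, such as reviewing server logs for the responses returned to each bot.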

Entity clarity across pages

Define core entities clearly and consistently. Each important concept, product, standard, or role should have a stable name and a clear definition. Use the same terms across guides, product pages, and documentation. When entities appear with multiple labels or shifting definitions, systems struggle to align content across sources. Clear entity naming helps retrieval systems connect related explanations.
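A simple audit for shifting labels is to count how often a preferred term and its known variants appear on each page. The sketch below assumes page text has already been extracted into a dictionary. The preferred label, the variant list, and the page contents are hypothetical.

```python
import re

# Hypothetical preferred label and the variants a team wants to consolidate.
PREFERRED = "generative engine optimization"
VARIANTS = ["generative SEO", "AI search optimization"]


def label_usage(pages, preferred, variants):
    """Return, per page, how many times each label appears (case-insensitive)."""
    usage = {}
    for url, text in pages.items():
        counts = {}
        for label in [preferred, *variants]:
            pattern = r"\b" + re.escape(label) + r"\b"
            found = len(re.findall(pattern, text, flags=re.IGNORECASE))
            if found:
                counts[label] = found
        usage[url] = counts
    return usage


pages = {
    "/guide": "Generative engine optimization (GEO) prepares content for citation. "
              "Generative engine optimization builds on SEO.",
    "/blog": "Generative SEO is new. Some call it AI search optimization.",
}

usage = label_usage(pages, PREFERRED, VARIANTS)
for url, counts in usage.items():
    off_label = [label for label in counts if label != PREFERRED]
    print(url, counts, "needs review" if off_label else "consistent")
```

Pages that use only variant labels are natural candidates for a terminology pass.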

Consistent definitions and terminology

Place definitions near the top of sections where concepts first appear. Repeat definitions in a similar form across related pages. This repetition supports consistency signals across retrieval results. OpenAI documentation notes that structured, well-defined text improves retrieval quality in synthesis tasks (OpenAI, n.d.). Consistency across pages increases the chance that content aligns with other sources during answer assembly.

Clear authorship and sourcing

Identify who wrote the content and what expertise they bring. Author names, role descriptions, and source citations help systems and users evaluate reliability. Link to primary sources when referencing standards, research, or platform behavior. Clear sourcing supports confidence during retrieval and citation.

Section-level completeness

Design each section to stand on its own. Each heading should introduce a specific question or concept, followed by a direct explanation and, where helpful, an example. Avoid relying on surrounding context to complete meaning. Retrieval systems evaluate sections independently, so completeness at the section level improves reuse.

Stable updates and version control

Update content to improve clarity or accuracy without changing core definitions frequently. Major changes in terms or framing make content less consistent across retrieval cycles. Stable explanations allow systems to recognize patterns over time.
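One way to catch unintended changes to core definitions is to compare each definition before and after an update and flag large differences. The sketch below uses `difflib.SequenceMatcher` from the standard library. The 0.8 threshold and the sample definitions are assumptions, and a team would tune the threshold to its own content.

```python
from difflib import SequenceMatcher


def drifted_definitions(previous, current, threshold=0.8):
    """Return terms whose definition was removed or changed beyond the threshold."""
    drifted = []
    for term, old_text in previous.items():
        new_text = current.get(term)
        if new_text is None:
            drifted.append(term)  # definition no longer present
            continue
        similarity = SequenceMatcher(None, old_text, new_text).ratio()
        if similarity < threshold:
            drifted.append(term)
    return drifted


# Hypothetical definitions before and after a content update.
previous = {
    "GEO": "GEO is the practice of optimizing content so AI search systems can cite it.",
    "citation": "A citation is a source linked inside a generated answer.",
}
current = {
    "GEO": "GEO is the practice of optimizing content so that AI search systems can cite it.",
    "citation": "Citations are a growth channel for paid media teams.",
}

print(drifted_definitions(previous, current))
```

Here the small wording fix to the first definition passes, while the reframed second definition is flagged for review.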

This checklist supports gradual improvement. B2B content teams can apply it page by page, starting with core topic hubs and evergreen guides. Over time, these practices increase the likelihood that their content appears within generative answers.

Conclusion

Generative search has changed how visibility works. Generative search systems now pull information and build explanations directly on results pages. This affects how users find answers and how brands get seen during research. Search still connects people with information, but it does so differently.

Traditional SEO focused on ranking pages and capturing clicks. Generative systems focus on retrieving meaning and citing sources that support an answer. Visibility now depends on whether content explains a topic clearly at the section level and aligns with common explanations across the web. Position matters less when answers appear before links.

For B2B companies, this shift requires a change in emphasis. SEO programs must prepare content for retrieval and reuse. SEO teams benefit from focusing on entities, topic coverage, and clear structure. These elements help systems identify, compare, and cite content across many related questions.

Measurement must also adjust. Clicks and rankings remain useful for some queries, especially navigational ones. They do not describe influence in generative results. Citation presence, branded mentions, and assisted influence provide a better view of how content supports buyer understanding earlier in the process.

B2B teams that adapt early gain steady visibility as generative search expands. They build content that systems trust and reuse. They align structure, terminology, and sourcing across pages. Over time, this approach supports presence across a wide range of questions without relying on exact phrasing or position.

SEO continues to matter. Its role now focuses on retrieval readiness and the quality of explanations. B2B teams that understand this change and adjust their practices position their content to remain visible as search behavior evolves.

GEO is the practice of optimizing content so that AI-powered search systems can retrieve, evaluate, and cite it within generated answers. Unlike traditional SEO, which focuses on ranking in a list of links, GEO focuses on whether your content explains a topic clearly enough for AI systems to include it as a source in on-page answers like Google’s AI Overviews.

AI Overviews significantly reduce organic CTR. Data from Seer Interactive shows that organic click-through rate dropped from 1.41% to 0.64% on queries where AI Overviews appeared. However, brands cited within an AI Overview see higher engagement: organic CTR rose from 0.74% to 1.02% when a brand was included as a source in the generated answer (Seer Interactive, 2025).
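The Seer Interactive figures quoted above are absolute CTR values. The short calculation below expresses them as relative changes, taking the four percentages exactly as stated. The function name is illustrative.

```python
def relative_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, as a fraction of `before`."""
    return (after - before) / before


# CTR values quoted above, in percent.
overall = relative_change(1.41, 0.64)  # queries where AI Overviews appeared
cited = relative_change(0.74, 1.02)    # brand included as a cited source

print(f"overall: {overall:.1%}, cited: {cited:.1%}")
```

In relative terms, the overall drop is roughly 55% and the lift for cited brands is roughly 38%. The two comparisons start from different baselines, so they should be read separately.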

Generative search systems retrieve content based on meaning, not exact keyword matches. A page that repeats a query phrase but doesn’t fully explain the topic contributes little to a generated answer. AI systems need definitions, process steps, comparisons, and examples to assemble useful responses. Keywords still help identify demand, but they work better as topic planning signals than as direct optimization targets.

Ranking measures where a page appears in a list of results and depends on clicks for success. Citation measures whether a page is referenced as a source inside a generated answer. Both models operate at the same time, but they measure different things. A page can rank well and receive no citations, or rank lower and still influence understanding by being cited in an AI Overview. For informational and research queries, citation is becoming the stronger visibility signal.

B2B teams should supplement traditional traffic metrics with citation frequency, branded mentions in generated answers, and assisted influence, meaning whether AI Overview appearances correlate with downstream actions like branded searches or demo requests. Tracking these signals at the topic level rather than the page level gives a more accurate picture of how content supports buyer research in a zero-click environment.

Resources

- Gartner, Inc. (n.d.). B2B Buying: How Top CSOs and CMOs Optimize the Journey. https://www.gartner.com/en/sales/insights/b2b-buying-journey

- Google Search Central. (2023, February 8). Google Search's guidance about AI-generated content. https://developers.google.com/search/blog/2023/02/google-search-and-ai-content

- Google Search Central. (2023, August 8). Changes to HowTo and FAQ rich results. https://developers.google.com/search/blog/2023/08/howto-faq-changes

- Google Search Central. (2025, May 21). Top ways to ensure your content performs well in Google's AI experiences on Search. https://developers.google.com/search/blog/2025/05/succeeding-in-ai-search

- OpenAI. (n.d.). Retrieval. OpenAI Platform Documentation. https://platform.openai.com/docs/guides/retrieval

- OpenAI. (n.d.). Web search. OpenAI Platform Documentation. https://platform.openai.com/docs/guides/tools-web-search

- Search Engine Journal. (2025, July 30). How to win in generative engine optimization (GEO). https://www.searchenginejournal.com/win-generative-engine-optimization-peecai-spa/550612/

- Search Engine Journal. (2026, January 21). A little clarity on SEO, GEO, and AEO. https://www.searchenginejournal.com/a-little-clarity-on-seo-geo-and-aeo/565522/

- Seer Interactive. (2024). Why 2020's SEO KPIs won't work in 2024 in a GenAI & Data Scarce world. https://www.seerinteractive.com/insights/why-2020s-seo-kpis-wont-work-in-2024-in-a-genai-data-scarce-world

- Seer Interactive. (2025). Google AI Overview Study - SEO & PPC CTR impact. https://www.seerinteractive.com/insights/ctr-aio/

- Search Engine Land. (2025, April 21). New data: Google AI Overviews are hurting click-through rates. https://searchengineland.com/google-ai-overviews-hurt-click-through-rates-454428

- Search Engine Land. (2025, February 5). Google organic and paid CTRs hit new lows:

- Search Engine Land. (2025, June 5). Zero-click searches rise, organic clicks dip: Report. https://searchengineland.com/zero-click-searches-up-organic-clicks-down-456660

- Search Engine Land. (2025, June). New Google AI Overviews data: Search clicks fell 30% in last year. https://searchengineland.com/google-ai-overviews-search-clicks-fell-report-455498